DO BETTER, BE THE BEST


Analyze the status quo without being complacent.

Never stop improving, and steadily advance toward the future.

Bring integrated marketing from strategy to execution, and into the real market.

IREP will be your marketing partner for your challenges.

OVERSEAS OFFICE

We provide our best solutions globally through our overseas offices.

Irep Inc. is an award-winning global digital marketing agency based in the San Francisco Bay Area.

Irep China is an advertising agency specializing in digital marketing and offline marketing campaigns for the Japanese market.

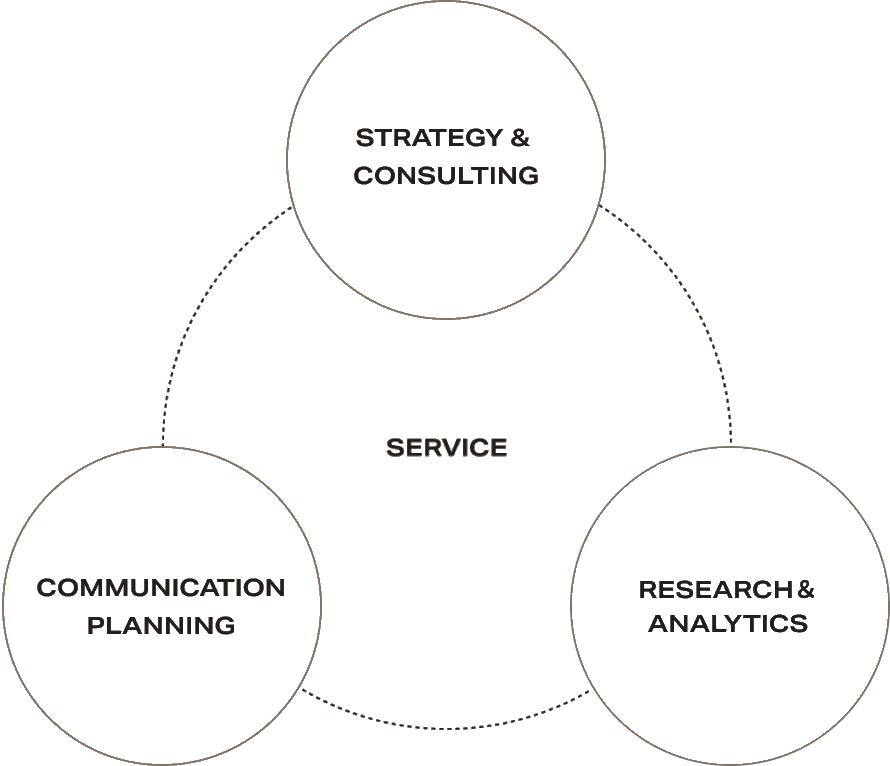

SERVICE

Connecting your business and the world with every approach in integrated marketing.

Every solution for your marketing challenges: we handpick what is truly needed to achieve your objectives.

HISTORY

Our history: from our founding in 1997 to the birth of IREP in 2000, with much more to come.

- 1997

- Aspire Co., Ltd. founded by Masayuki Takayama

- 2000

- The company name was changed to IREP Co., Ltd. ("I Rep" is derived from a Japanese play on words meaning 'my agent')

- 2002

- Became the first Google AdWords agency in Japan

- 2003

- Certified by Overture as a "Yahoo! Official Agent"

- 2013

- Established Pt. Digital Marketing Indonesia in Jakarta

- 2014

- Opened IREP Beijing office

- Consolidated Digital Marketing Vietnam Corporation

- 2016

- Established D.A.Consortium Holdings Co., Ltd., a joint holding company with Digital Advertising Consortium Co., Ltd.

- 2019

- Established Irep Inc.

ACHIEVEMENTS

Expertise, proven by many partners

Irep has pursued expertise in the market and has been a leader with a top-class share in programmatic advertising.

- Won the Best Prize in 3 categories at the Google Premier Partner Awards (as of March 2020)

- 6 Stars Sales Partner Yahoo! Marketing Solution

- Meta Agency Partner

- Criteo Partner Status Platinum

OUR NETWORK

HAKUHODO DY GLOBAL NETWORK

The Hakuhodo DY Group has a global network of 419 subsidiaries and affiliates, with 25,000+ employees in 29+ countries/areas.

*As of March 2022

ALLIANCES

Hakuhodo DY Group Companies

Contact Us

Have an inquiry? We'll get in touch with you very soon.